Strengthening Digital Twins with Unit-Level Traceability

Digital twins (advanced digital twin technology in manufacturing) have the potential to transform how manufacturers design, manage, and optimize products and production systems. The technology acts as a bridge between the physical and digital worlds, enabling near real-time insights, simulation capabilities, and advanced analytics when supported by robust data infrastructure and industrial data integration.

However, the reliability of digital twin implementations depends heavily on the accuracy, integrity, and traceability of the underlying data (data governance and data integrity in manufacturing systems).

Unit-level traceability is a key enabler in addressing this challenge. By tracking individual components throughout the manufacturing process, it supports alignment between digital representations and their physical counterparts. When combined with strong data governance and multi-source data integration, organizations can trace signals back to their originating data sources and transformations.

This approach helps build resilient data infrastructures, enhances quality control in manufacturing, and supports verification of digital twin accuracy for more reliable and data-driven manufacturing operations.

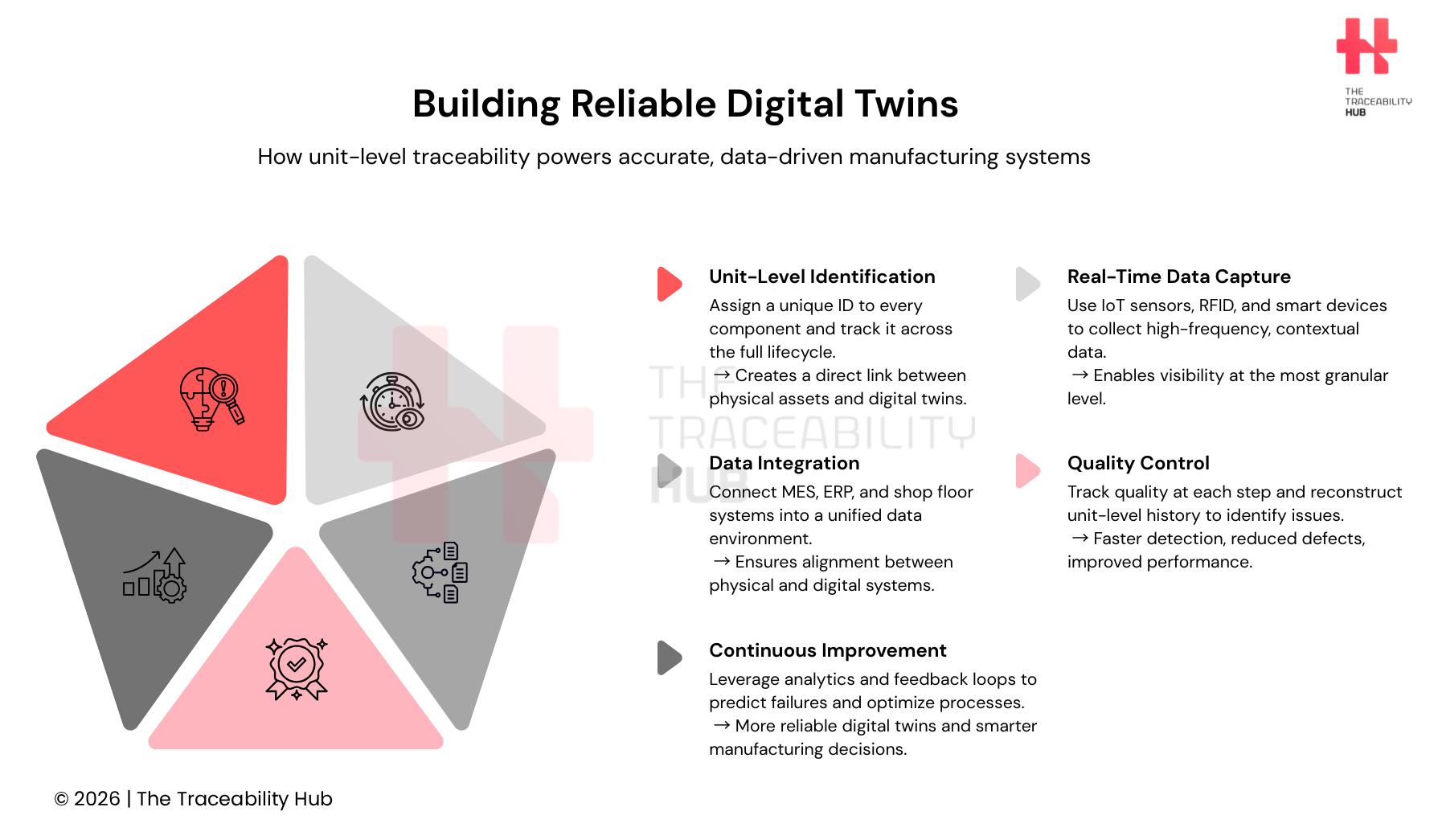

Building Reliable Digital Twins

Understanding Unit-Level Traceability in Digital Twins

What Unit-Level Traceability Means in Manufacturing

Unit-level identification provides the highest tracking granularity (unit-level tracking vs. batch traceability), enabling manufacturers to monitor individual products throughout their lifecycle wherever systems and data integration allow. This approach assigns a unique identifier to each tracked unit, turning every product into an individually identifiable item from manufacturing through distribution and, in some cases, final sale.

High-value and regulated items such as aerospace components, medical devices, and precision instruments often require this level of detail to meet regulatory compliance requirements and support quality assurance and warranty management.

Traceability systems (manufacturing traceability systems and product traceability platforms) are designed to track parts and products throughout the manufacturing process, from the moment raw materials enter the factory to when finished goods are shipped. These systems may capture information such as inspection results, assembly details, and time spent at each production stage (shop floor visibility and production monitoring systems).
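To make the idea of a per-unit record concrete, the Python sketch below assumes a traceability system that logs one entry event and one exit event per unit and station, then derives the time each unit spent at each stage. The `StationEvent` structure, its fields, and the serial format are hypothetical, not the schema of any particular traceability product.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass(frozen=True)
class StationEvent:
    """One hypothetical traceability record: a unit entering or leaving a station."""
    serial: str                   # unique unit identifier, e.g. decoded from a Data Matrix code
    station: str                  # production stage, e.g. "assembly" or "inspection"
    kind: str                     # "enter" or "exit"
    timestamp: datetime
    result: Optional[str] = None  # optional inspection outcome recorded on exit


def dwell_times(events: list[StationEvent], serial: str) -> dict[str, float]:
    """Return the seconds one unit spent at each station, from paired enter/exit events."""
    entered: dict[str, datetime] = {}
    dwell: dict[str, float] = {}
    for ev in sorted(events, key=lambda e: e.timestamp):
        if ev.serial != serial:
            continue
        if ev.kind == "enter":
            entered[ev.station] = ev.timestamp
        elif ev.kind == "exit" and ev.station in entered:
            start = entered.pop(ev.station)
            seconds = (ev.timestamp - start).total_seconds()
            dwell[ev.station] = dwell.get(ev.station, 0.0) + seconds
    return dwell


t0 = datetime(2024, 1, 1, 8, 0, 0)
events = [
    StationEvent("SN-0001", "assembly", "enter", t0),
    StationEvent("SN-0001", "assembly", "exit", t0 + timedelta(seconds=90)),
    StationEvent("SN-0001", "inspection", "enter", t0 + timedelta(seconds=120)),
    StationEvent("SN-0001", "inspection", "exit", t0 + timedelta(seconds=150), result="pass"),
]
print(dwell_times(events, "SN-0001"))  # {'assembly': 90.0, 'inspection': 30.0}
```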

The choice between batch-level and unit-level traceability depends on product value, regulatory requirements, and risk tolerance. In practice, many manufacturers adopt hybrid approaches, applying unit-level tracking to critical components while maintaining batch-level traceability for bulk materials (hybrid traceability models in manufacturing).
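A hybrid model can be pictured as two kinds of identifiers linked together: serial numbers for individually tracked units and lot IDs for batch-tracked materials. The minimal sketch below assumes an in-memory store; the `HybridGenealogy` class, its methods, and the identifiers are illustrative names, not a real API.

```python
from collections import defaultdict


class HybridGenealogy:
    """Hypothetical genealogy store linking serialized units to the material lots they consumed."""

    def __init__(self) -> None:
        self._lots_by_unit: dict[str, set[str]] = defaultdict(set)
        self._units_by_lot: dict[str, set[str]] = defaultdict(set)

    def record_consumption(self, unit_serial: str, lot_id: str) -> None:
        """Link one individually tracked unit to a batch-tracked material lot."""
        self._lots_by_unit[unit_serial].add(lot_id)
        self._units_by_lot[lot_id].add(unit_serial)

    def units_affected_by(self, lot_id: str) -> set[str]:
        """Return every serialized unit that consumed material from the given lot."""
        return set(self._units_by_lot.get(lot_id, set()))

    def lots_for(self, unit_serial: str) -> set[str]:
        """Return every material lot consumed by the given unit."""
        return set(self._lots_by_unit.get(unit_serial, set()))


g = HybridGenealogy()
g.record_consumption("SN-0001", "RESIN-LOT-42")
g.record_consumption("SN-0002", "RESIN-LOT-42")
g.record_consumption("SN-0003", "RESIN-LOT-43")
print(sorted(g.units_affected_by("RESIN-LOT-42")))  # ['SN-0001', 'SN-0002']
```

If a resin lot is later found to be nonconforming, `units_affected_by` returns only the serialized units that consumed it, which is one practical benefit of linking the two levels of traceability.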

How Digital Twins Track Individual Components

Digital twins enable continuous monitoring, simulation, and analysis of physical assets across different stages of their lifecycle. When supported by appropriate infrastructure, they can enable near real-time and bidirectional data exchange (real-time data synchronization) between a physical object and its virtual representation, supporting alignment between simulated conditions and the physical system.

At a more granular level, component twins (also referred to as part twins or component-level digital twins) focus on replicating individual components and providing detailed insight into their performance and condition. For example, a component twin may use data collected from IoT sensors on the physical asset to represent a valve in an oil pipeline, a motor in a wind turbine, or a turbocharger in a vehicle.

Depending on system design, digital twins can reflect the condition of individual components, such as sensors, circuits, and capacitors, in near real time or at defined update intervals. When combined with advanced analytics, they can support the identification of early warning signs and enable predictive maintenance strategies.
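As a rough illustration, a component twin can be reduced to a per-part object that mirrors incoming sensor readings and raises an early warning when a rolling average drifts above a healthy baseline. The `ComponentTwin` class, the 20% warning margin, and the five-reading window are assumptions for this sketch; production systems would typically use richer condition models.

```python
from collections import deque
from statistics import mean


class ComponentTwin:
    """Minimal, hypothetical twin of one serialized component and one sensor signal."""

    def __init__(self, serial: str, baseline: float, warn_ratio: float = 1.2, window: int = 5) -> None:
        self.serial = serial                  # unit-level identifier of the physical part
        self.baseline = baseline              # expected healthy value, e.g. vibration RMS in mm/s
        self.warn_ratio = warn_ratio          # warn when the rolling mean exceeds baseline * warn_ratio
        self.readings = deque(maxlen=window)  # only the most recent readings are kept

    def update(self, value: float) -> None:
        """Mirror the latest reading from the physical component."""
        self.readings.append(value)

    def early_warning(self) -> bool:
        """True once a full window of readings averages above the warning level."""
        if len(self.readings) < self.readings.maxlen:
            return False
        return mean(self.readings) > self.baseline * self.warn_ratio


twin = ComponentTwin("VALVE-0042", baseline=2.0)
for value in [2.1, 2.0, 2.2, 1.9, 2.0]:
    twin.update(value)
print(twin.early_warning())  # False: rolling mean is about 2.04, below 2.4
for value in [2.8, 2.9, 3.0, 2.7, 2.6]:
    twin.update(value)
print(twin.early_warning())  # True: rolling mean is about 2.8, above 2.4
```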

Data Requirements for Unit-Level Tracking

Unit-level traceability relies on the integration of multiple technologies to uniquely identify, monitor, and record the movement of individual items (end-to-end supply chain visibility). Depending on the use case, these systems may combine tools such as RFID, smart labels and NFC tags, environmental sensors, geolocation technologies (e.g., GPS, BLE, or UWB), and distributed data infrastructures.

RFID tags use wireless communication to enable the identification of items at specific checkpoints, where readers capture identifiers that link to information about a product’s movement, handling, and processing history (traceability data systems). NFC tags operate on similar principles but are typically designed for short-range interaction, making them suitable for applications such as consumer engagement and product authentication when combined with secure digital systems.

Additional technologies, including environmental sensors and geolocation devices, can provide contextual data that enhances visibility and transparency across the supply chain.

Blockchain and other distributed ledger technologies (blockchain traceability systems) can be used to store or anchor traceability records in a tamper-resistant and auditable manner. However, their effectiveness depends on the quality and integrity of the data recorded, as well as the available budget, the overall system design, interoperability and governance.
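The tamper-evidence property can be illustrated without a full distributed ledger. The sketch below is a simplified hash chain, not a blockchain: each traceability record is hashed together with the previous hash using SHA-256, so changing any stored record invalidates every hash after it. The record fields and the all-zero genesis value are assumptions for illustration.

```python
import hashlib
import json


def record_hash(record: dict, prev_hash: str) -> str:
    """Hash a record (as canonical JSON with sorted keys) together with the previous hash."""
    payload = json.dumps(record, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def build_chain(records: list[dict]) -> list[str]:
    """Return one chained hash per record; the first record links to a fixed genesis value."""
    hashes, prev = [], "0" * 64
    for rec in records:
        prev = record_hash(rec, prev)
        hashes.append(prev)
    return hashes


def verify_chain(records: list[dict], hashes: list[str]) -> bool:
    """Recompute the chain; any edited, inserted, or removed record makes this False."""
    return len(records) == len(hashes) and build_chain(records) == hashes


log = [
    {"serial": "SN-0001", "station": "assembly", "result": "pass"},
    {"serial": "SN-0001", "station": "inspection", "result": "pass"},
]
anchors = build_chain(log)         # e.g. the last hash could be anchored externally
print(verify_chain(log, anchors))  # True
log[1]["result"] = "fail"          # tamper with a stored record
print(verify_chain(log, anchors))  # False
```

Consistent with the caveat above, the chain only shows that records have not changed since they were hashed; it says nothing about whether they were correct when captured.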

Building the Data Infrastructure for Traceability

IoT Sensors and Data Collection at Unit Level

Industrial IoT (IIoT) sensors (smart sensors in manufacturing environments) are deployed across machines, production units, and even specific components – such as spindles – to capture near real-time or high-frequency production and condition data. These ruggedized smart sensors collect operational information and transmit data to edge devices or gateways, enabling continuous monitoring and analysis. In high-frequency applications, such as vibration monitoring, they can generate large volumes of data, including thousands of data points per minute (high-frequency industrial data streams).
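Because of these volumes, edge devices often summarize raw streams before forwarding them. The sketch below assumes fixed-size windows and uses the root-mean-square (RMS) value as the summary feature, an illustrative choice rather than one the text prescribes. For example, a sensor sampling at 10 kHz with a 10,000-sample window would forward one value per second instead of 10,000.

```python
import math


def window_rms(samples: list[float], window: int) -> list[float]:
    """Reduce a raw vibration stream to one RMS value per complete window of samples.

    Trailing samples that do not fill a whole window are dropped (they could be
    carried over to the next batch in a streaming implementation).
    """
    if window <= 0:
        raise ValueError("window must be positive")
    out = []
    for start in range(0, len(samples) - window + 1, window):
        chunk = samples[start:start + window]
        out.append(math.sqrt(sum(x * x for x in chunk) / window))
    return out


print(window_rms([1.0, -1.0, 1.0, -1.0, 2.0, -2.0, 2.0, -2.0], window=4))  # [1.0, 2.0]
```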

Equipment status sensors monitor parameters such as machine on/off state, operating speed, motor rotation, and spindle performance. Condition monitoring sensors capture variables including temperature, vibration, and pressure, supporting performance analysis and predictive maintenance strategies.

Environmental sensors track surrounding conditions, such as temperature, acceleration, humidity, air pressure and airborne contaminants, helping ensure process stability and compliance in controlled manufacturing environments.

In addition, proximity sensors detect the presence or distance of workers relative to machines or robotic systems, supporting industrial safety mechanisms when integrated into broader safety architectures.

Integrating Multi-Source Manufacturing Data

Manufacturers generate data from a wide range of sources, including production equipment, shop floor sensors, enterprise resource planning (ERP) systems, manufacturing execution systems (MES), customer relationship management (CRM) platforms, and IoT devices (multi-source manufacturing data ecosystem).

Business systems capture information related to supply chains, inventory levels, sales, and customer demand, while manufacturing technologies provide detailed insights into production processes, including machine output and operational status (manufacturing operations data).

To make this data usable, integration across these sources is essential. Data virtualization can create a unified view of distributed data without requiring it to be physically moved. A virtualization layer queries multiple systems and combines the results, enabling access to integrated information in near real time where system architecture and latency allow (real-time data access and interoperability).
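A virtualization layer can be sketched as a set of source adapters that are queried on demand and merged by unit serial number. In the sketch below, plain dictionaries stand in for MES and ERP systems; the `VirtualUnitView` class and the adapter signature are hypothetical, and a real layer would use database or API connectors instead.

```python
from typing import Callable

# A source adapter takes a unit serial and returns that system's fields for the unit.
SourceAdapter = Callable[[str], dict]


class VirtualUnitView:
    """Hypothetical virtualization layer: queries each source on demand and merges the answers."""

    def __init__(self) -> None:
        self._sources: dict[str, SourceAdapter] = {}

    def register(self, name: str, adapter: SourceAdapter) -> None:
        self._sources[name] = adapter

    def get(self, serial: str) -> dict:
        """Build a unified record at query time; nothing is copied into a central store."""
        merged: dict = {"serial": serial}
        for name, adapter in self._sources.items():
            for field, value in adapter(serial).items():
                merged[f"{name}.{field}"] = value  # prefix keeps each value's origin visible
        return merged


mes_db = {"SN-0001": {"line": "L2", "first_pass": True}}  # stand-in for an MES
erp_db = {"SN-0001": {"order": "SO-1001"}}                # stand-in for an ERP system
view = VirtualUnitView()
view.register("mes", lambda s: mes_db.get(s, {}))
view.register("erp", lambda s: erp_db.get(s, {}))
print(view.get("SN-0001"))
# {'serial': 'SN-0001', 'mes.line': 'L2', 'mes.first_pass': True, 'erp.order': 'SO-1001'}
```

Prefixing each field with its source name keeps the origin of every value visible, which supports tracing signals back to their originating systems.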

Real-Time Data Synchronization Between Physical and Digital Systems

Real-time digital twin systems often rely on timely or near-real-time access to data, but maintaining synchronization between physical and digital systems presents several challenges. Variability in physical environments, uncertainty in measurements, differences in scale between physical and virtual representations, and the heterogeneity of data sources can all impact synchronization accuracy.

To address these challenges, synchronization mechanisms typically involve transmitting the state of physical assets over networks and applying techniques such as buffering, time alignment, and latency compensation. These systems are generally designed to operate in a non-blocking manner, minimizing any impact on the performance and throughput of the physical processes they monitor (high-performance manufacturing systems).
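Time alignment and latency compensation can be illustrated with a zero-order hold: for each twin update time, take the latest sensor value measured at or before it. The sketch assumes a known, constant network latency and a maximum gap beyond which data is treated as stale; real systems usually have to estimate latency and handle clock drift. The `align` function and its parameters are hypothetical.

```python
from bisect import bisect_right
from typing import Optional


def align(
    reference_ts: list[float],
    samples: list[tuple[float, float]],
    latency_s: float = 0.0,
    max_gap_s: float = 1.0,
) -> list[Optional[float]]:
    """For each reference timestamp, return the latest sample value at or before it.

    samples are (arrival_time_s, value) pairs sorted by arrival time. Subtracting
    latency_s shifts arrival times back to estimated measurement times. Returns None
    where no sample lies within max_gap_s (treated as missing or stale).
    """
    times = [t - latency_s for t, _ in samples]  # still sorted: constant shift
    values = [v for _, v in samples]
    out: list[Optional[float]] = []
    for ref in reference_ts:
        i = bisect_right(times, ref) - 1  # index of the last sample at or before ref
        if i >= 0 and ref - times[i] <= max_gap_s:
            out.append(values[i])
        else:
            out.append(None)
    return out


twin_ticks = [1.0, 2.0, 3.0, 4.0]
arrivals = [(1.2, 10.0), (2.2, 11.0), (3.2, 12.0)]  # each arrives 0.3 s after measurement
print(align(twin_ticks, arrivals, latency_s=0.3))  # [10.0, 11.0, 12.0, None]
```

Without the latency correction, the same call returns `[None, 10.0, 11.0, 12.0]`, so each reading is attributed to the twin update after the one at which it was actually measured.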

Managing Heterogeneous Data from Production Units

Industrial data is inherently complex and heterogeneous, originating from a wide range of sources and formats across the manufacturing environment (industrial data management challenges). At the process level, data includes inputs from sensors and actuators measuring variables such as temperature, pressure, and flow rate.

At the control level, data includes setpoints, control logic states, and system status information generated by automation systems. At the supervisory level, systems capture alarms, events, and historical process data, supporting production monitoring and operational decision-making.

Beyond the shop floor, manufacturing operations data encompasses areas such as maintenance, inventory, quality management, energy consumption, and production performance (end-to-end manufacturing visibility).

To manage this complexity, organizations adopt standardization strategies across data models, formats, and tools. When combined with strong data governance and interoperability frameworks, these approaches can help reduce complexity, improve data consistency, and streamline industrial processes within smart manufacturing environments.
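Standardization often comes down to mapping each source format onto one canonical record. The sketch below assumes two hypothetical input styles, a PLC-style record whose meaning is encoded in the tag name and a historian-style record with explicit fields, and converts both to degrees Celsius or kilopascals. The tag names, the `TAG_MAP` dictionary, and the canonical schema are illustrative assumptions.

```python
CANONICAL_UNIT = {"temperature": "degC", "pressure": "kPa"}

# Converters from each source unit to the canonical unit for its signal.
TO_CANONICAL = {
    "degC": lambda v: v,
    "degF": lambda v: (v - 32.0) * 5.0 / 9.0,
    "kPa": lambda v: v,
    "psi": lambda v: v * 6.894757,  # 1 psi is about 6.894757 kPa
}

# Hypothetical PLC tag dictionary: tag name -> (asset, signal, source unit).
TAG_MAP = {
    "L2_OVEN_TEMP_F": ("oven-2", "temperature", "degF"),
    "L2_PRESS_PSI": ("press-2", "pressure", "psi"),
}


def normalize(record: dict) -> dict:
    """Map a PLC-style or historian-style record onto one canonical reading."""
    if "tag" in record:  # PLC style: meaning is encoded in the tag name
        asset, signal, unit = TAG_MAP[record["tag"]]
        value = record["val"]
    else:                # historian style: fields are already explicit
        asset, signal, unit = record["asset"], record["measure"], record["unit"]
        value = record["value"]
    return {
        "asset_id": asset,
        "signal": signal,
        "value": TO_CANONICAL[unit](value),
        "unit": CANONICAL_UNIT[signal],
    }


print(normalize({"tag": "L2_OVEN_TEMP_F", "val": 212.0}))
# {'asset_id': 'oven-2', 'signal': 'temperature', 'value': 100.0, 'unit': 'degC'}
```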

Implementing Quality Control Through Unit-Level Data

Tracking Quality Factors at Each Manufacturing Station

Production monitoring captures key performance factors on the shop floor, including output rates, machine uptime, cycle times, and product quality (manufacturing KPIs and operational efficiency metrics). Quality monitoring focuses on indicators such as defect rates, scrap levels, defect types, and pass/fail results from inspections.

Operators and supervisors can receive real-time alerts when out-of-spec conditions occur, enabling faster response to deviations (real-time quality control systems). Live visibility of production performance helps teams react quickly when processes drift from defined standards, helping to contain issues before they escalate. Alerts can also be configured to flag threshold breaches, such as excessive downtime or missed cycle targets.
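A unit-level out-of-spec check can be sketched as comparing each unit's measurements with lower and upper specification limits. The characteristic names and limits below are hypothetical; the sketch also treats a missing measurement as an alert, on the assumption that a gap in a unit's record is itself worth flagging.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SpecLimit:
    """Hypothetical specification for one quality characteristic."""
    characteristic: str
    lower: float
    upper: float


def check_unit(serial: str, measurements: dict[str, float], specs: list[SpecLimit]) -> list[str]:
    """Return one alert per characteristic that is missing or outside its limits."""
    alerts = []
    for spec in specs:
        value = measurements.get(spec.characteristic)
        if value is None:
            alerts.append(f"{serial}: {spec.characteristic} not measured")
        elif not spec.lower <= value <= spec.upper:
            alerts.append(f"{serial}: {spec.characteristic}={value} outside [{spec.lower}, {spec.upper}]")
    return alerts


specs = [SpecLimit("bore_diameter_mm", 9.98, 10.02), SpecLimit("torque_nm", 4.5, 5.5)]
print(check_unit("SN-0001", {"bore_diameter_mm": 10.01, "torque_nm": 5.0}, specs))  # []
print(check_unit("SN-0002", {"bore_diameter_mm": 10.05}, specs))
# ['SN-0002: bore_diameter_mm=10.05 outside [9.98, 10.02]', 'SN-0002: torque_nm not measured']
```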

The cost of poor quality is often estimated to range from approximately 5% to 30% of gross sales, depending on the industry and operational maturity. To monitor and improve quality performance, manufacturers rely on metrics such as defect rates (the proportion of units that do not meet quality standards), Defective Parts Per Million (PPM), and First Pass Yield (FPY), which measures the percentage of products that meet specifications without requiring rework or scrap (quality performance metrics in manufacturing).
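The three metrics are simple ratios over unit-level counts. The sketch below uses made-up counts for illustration: 20,000 units started, 30 found defective, and 19,400 passing without rework or scrap, which gives a defect rate of 0.15%, 1,500 defective PPM, and an FPY of 97%.

```python
def quality_metrics(units_started: int, defective: int, passed_first_time: int) -> dict[str, float]:
    """Compute defect rate, defective parts per million, and first pass yield.

    units_started: units that entered the process
    defective: units that did not meet quality standards
    passed_first_time: units that met specification with no rework or scrap
    """
    if units_started <= 0:
        raise ValueError("units_started must be positive")
    return {
        "defect_rate": defective / units_started,
        "ppm": defective * 1_000_000 / units_started,
        "fpy": passed_first_time / units_started,
    }


print(quality_metrics(20_000, 30, 19_400))
# {'defect_rate': 0.0015, 'ppm': 1500.0, 'fpy': 0.97}
```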

Root Cause Analysis Using Unit-Level History

Root cause analysis (RCA) focuses on identifying underlying causes of production issues rather than addressing symptoms (advanced root cause analysis in manufacturing). Production anomalies can arise from one or more process states and often result from interactions across multiple variables in the manufacturing system.

By leveraging unit-level traceability data, organizations can reconstruct detailed production histories and analyze the sequence of events leading to defects or failures. Advanced analytical approaches, including techniques from process mining, machine learning, and Semantic Web technologies, can be used to identify patterns, relationships, and deviations within complex datasets.

Production states may include conditions such as buffer states, equipment status, work-in-progress levels, and material transfer events. When combined with traceability data, these methods can support a more integrated approach to identifying root causes and improving process reliability (data-driven manufacturing optimization).
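One simple way to use unit-level history in RCA is a commonality analysis: compute the defect rate for every station and machine combination that units passed through. This stratification technique is much simpler than the process mining, machine learning, and Semantic Web approaches mentioned above and is shown only to illustrate the data flow; it surfaces correlations, not confirmed causes. The routing data is hypothetical.

```python
from collections import Counter


def defect_rate_by_resource(
    histories: dict[str, dict[str, str]], defective: set[str]
) -> dict[tuple[str, str], float]:
    """Defect rate per (station, machine) pair from unit-level routing history.

    histories maps each unit serial to {station: machine that processed the unit}.
    """
    processed: Counter = Counter()
    failed: Counter = Counter()
    for serial, route in histories.items():
        for station, machine in route.items():
            processed[(station, machine)] += 1
            if serial in defective:
                failed[(station, machine)] += 1
    return {key: failed[key] / processed[key] for key in processed}


histories = {
    "SN-1": {"milling": "M1", "coating": "C1"},
    "SN-2": {"milling": "M2", "coating": "C1"},
    "SN-3": {"milling": "M1", "coating": "C2"},
    "SN-4": {"milling": "M2", "coating": "C2"},
}
rates = defect_rate_by_resource(histories, defective={"SN-3", "SN-4"})
print(max(rates, key=rates.get))  # ('coating', 'C2')
```

Here both milling machines show a 50% defect rate while coating machine C2 shows 100%, so C2 becomes the first candidate to investigate.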

Predictive Quality Models Based on Traceability Data

Machine learning algorithms (AI in manufacturing and predictive analytics) analyze historical quality and traceability data to develop models that estimate future quality outcomes. Methods such as Random Forest, Support Vector Regression, and deep learning architectures are commonly applied to support quality prediction in manufacturing processes.

These models can identify patterns and anomalies associated with defects based on historical and contextual production data (predictive quality analytics). When integrated into manufacturing systems, predictive analytics tools can be used to forecast quality performance and highlight risk factors that may affect product quality.
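To keep the example dependency-free, the sketch below swaps in a k-nearest-neighbours classifier instead of the Random Forest, Support Vector Regression, or deep learning methods named above (those are usually taken from a library such as scikit-learn). It predicts a unit's pass/fail label from the labels of its most similar historical units. The two features and the training data are made up; in practice, features on different scales should be standardized first.

```python
import math
from collections import Counter


def knn_predict(history: list[tuple[list[float], str]], features: list[float], k: int = 3) -> str:
    """Predict a quality label by majority vote of the k nearest historical units (Euclidean)."""
    if not 0 < k <= len(history):
        raise ValueError("k must be between 1 and the number of historical units")
    nearest = sorted(history, key=lambda item: math.dist(item[0], features))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]


# Hypothetical unit history: [temperature deviation (degC), vibration RMS (mm/s)] -> outcome
history = [
    ([0.1, 1.0], "pass"),
    ([0.2, 1.1], "pass"),
    ([0.0, 0.9], "pass"),
    ([2.5, 3.0], "fail"),
    ([2.8, 3.2], "fail"),
    ([3.0, 2.9], "fail"),
]
print(knn_predict(history, [0.15, 1.05]))  # pass
print(knn_predict(history, [2.7, 3.1]))    # fail
```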

Validation and Reliability Measurement

Testing Digital Twin Accuracy Against Physical Units

Validation involves comparing data from digital twins with corresponding physical systems to assess their accuracy and reliability for real-world applications (digital twin validation methods). Various frameworks and methodologies exist to evaluate the level of similarity between physical assets and their digital representations, often using statistical analysis, simulation models, and performance metrics.

In practice, organizations may use simulation data alongside real-world observations in controlled test environments to calculate engineering Key Performance Indicators (KPIs) and correlation metrics, helping quantify how closely the digital model reflects actual system behavior.
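Two common ways to quantify how closely a twin tracks reality are the root-mean-square error (RMSE), which measures the typical size of the discrepancy, and the Pearson correlation coefficient, which measures whether the twin follows the same trend. The sketch below computes both from paired simulated and measured values; the choice of these two metrics and the sample data are illustrative, and acceptable thresholds depend on the application.

```python
import math


def twin_fidelity(simulated: list[float], measured: list[float]) -> dict[str, float]:
    """Compare digital twin outputs with physical measurements taken at the same instants."""
    n = len(simulated)
    if n != len(measured) or n < 2:
        raise ValueError("need two equal-length series with at least two points")
    rmse = math.sqrt(sum((s - m) ** 2 for s, m in zip(simulated, measured)) / n)
    mean_s, mean_m = sum(simulated) / n, sum(measured) / n
    cov = sum((s - mean_s) * (m - mean_m) for s, m in zip(simulated, measured))
    var_s = sum((s - mean_s) ** 2 for s in simulated)
    var_m = sum((m - mean_m) ** 2 for m in measured)
    if var_s == 0 or var_m == 0:
        raise ValueError("correlation is undefined for a constant series")
    r = cov / math.sqrt(var_s * var_m)  # Pearson correlation coefficient
    return {"rmse": rmse, "pearson_r": r}


result = twin_fidelity([1.0, 2.0, 3.0, 4.0], [1.1, 1.9, 3.2, 3.8])
print(result)  # RMSE of about 0.158 and pearson_r of about 0.991
```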

Measuring Traceability System Performance

Measurement traceability refers to the ability to link measurement results through an unbroken chain of documented calibrations to recognized reference standards, as defined by ISO (ISO measurement traceability standards). This ensures consistency, accuracy, and comparability of measurements across time, locations, and teams.
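To illustrate what an unbroken chain means in data terms, the sketch below follows calibration certificates from a shop-floor instrument up to a reference standard and rejects the chain if any link is missing, expired on the measurement date, or circular. The `Calibration` fields and identifiers are hypothetical, and combining uncertainties by root-sum-square assumes independent contributions; real uncertainty budgets are usually more detailed.

```python
import math
from dataclasses import dataclass
from datetime import date
from typing import Dict, List, Optional, Set, Tuple


@dataclass(frozen=True)
class Calibration:
    """Hypothetical calibration certificate: `instrument` was calibrated against `reference`."""
    instrument: str
    reference: str
    valid_until: date
    std_uncertainty: float  # standard uncertainty contributed by this calibration step


def trace_to_standard(
    instrument: str, certs: Dict[str, Calibration], standards: Set[str], on: date
) -> Optional[Tuple[List[str], float]]:
    """Follow calibrations upward from an instrument to a reference standard.

    Returns (chain of identifiers, combined standard uncertainty), or None if any
    link is missing, expired on the measurement date, or loops back on itself.
    """
    chain: List[Calibration] = []
    current = instrument
    while current not in standards:
        cert = certs.get(current)
        if cert is None or cert.valid_until < on or len(chain) >= len(certs):
            return None  # broken chain
        chain.append(cert)
        current = cert.reference
    # Root-sum-square, assuming independent contributions with unit sensitivity.
    combined = math.sqrt(sum(c.std_uncertainty ** 2 for c in chain))
    return [c.instrument for c in chain] + [current], combined


certs = {
    "gauge-17": Calibration("gauge-17", "lab-master-3", date(2025, 6, 30), 0.003),
    "lab-master-3": Calibration("lab-master-3", "national-standard", date(2026, 1, 31), 0.004),
}
standards = {"national-standard"}
print(trace_to_standard("gauge-17", certs, standards, date(2025, 1, 15)))  # chain, about 0.005
print(trace_to_standard("gauge-17", certs, standards, date(2025, 7, 1)))   # None: expired link
```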

Case Examples of Unit-Level Implementation

Several solutions demonstrate unit-level traceability by capturing and decoding Data Matrix codes on products moving through high-speed production lines using synchronized lighting and real-time processing techniques (high-speed traceability systems).

In another example, an automotive Tier 1 manufacturer integrated machine learning models within digital twin systems to optimize process parameters based on raw material quality and machine data, significantly reducing the need for manual intervention (AI-driven manufacturing optimization).

Compliance and Audit Readiness

Manufacturing compliance ensures that production processes meet legal, safety, and quality standards established by regulatory bodies such as OSHA, FDA, and EPA (regulatory compliance in manufacturing).

Non-compliance can result in significant financial penalties, operational disruptions, and reputational risks. Emerging regulations, such as those related to environmental reporting (e.g., PFAS reporting), are increasing the need for robust traceability, audit readiness, and data transparency across manufacturing operations.

Continuous Improvement Through Feedback Loops

Closed-loop systems feed operational insights back into engineering and manufacturing processes. Data collected from real-world operations flows into digital twins, enabling continuous refinement of models and processes (digital twin feedback loops).

This feedback loop allows teams to identify and address potential issues earlier in the lifecycle, supporting more proactive decision-making and predictive maintenance while improving overall production performance.
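A minimal way to picture the loop in code is a twin whose prediction is corrected by a bias term that is updated from each observed outcome. The sketch below uses an exponential moving average of prediction errors; the `BiasCorrectedTwin` class, the smoothing factor, and the cycle-time model are assumptions for illustration, not a method described in the text.

```python
class BiasCorrectedTwin:
    """Hypothetical twin output corrected by a bias learned from observed outcomes."""

    def __init__(self, model, alpha: float = 0.2) -> None:
        self.model = model  # callable: process input -> predicted value
        self.alpha = alpha  # smoothing factor of the moving average, between 0 and 1
        self.bias = 0.0     # current correction added to every model prediction

    def predict(self, x: float) -> float:
        return self.model(x) + self.bias

    def feedback(self, x: float, observed: float) -> None:
        """Close the loop: move the bias a fraction alpha toward the latest raw residual
        (observed value minus the uncorrected model output)."""
        error = observed - self.predict(x)
        self.bias += self.alpha * error


twin = BiasCorrectedTwin(lambda x: 10.0 * x)  # hypothetical cycle-time model, in seconds
for _ in range(30):
    twin.feedback(1.0, 10.5)  # the physical process keeps running 0.5 s slower
print(round(twin.bias, 3))  # 0.499
```

After 30 observations, the learned bias is close to the true 0.5 s offset, so the twin's predictions now track the physical process instead of the original model.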

Turning Traceability into Measurable Value

Unit-level traceability plays a critical role in enabling reliable digital twin implementations in smart manufacturing. By tracking individual components through IoT-enabled systems, real-time data synchronization mechanisms and resilient data infrastructure, organizations can improve visibility into production processes.

This approach supports more precise quality control, enables predictive capabilities, and enhances root cause analysis. It can also contribute to defect reduction, improved manufacturing KPIs, and support compliance with regulatory requirements when implemented effectively.

By leveraging high-granularity, unit-level manufacturing data, manufacturers gain actionable insights that help continuously improve production processes. These systems can deliver measurable benefits, including reduced waste, improved operational efficiency, and stronger data-driven decision-making in modern industrial environments.
